The universe trends toward disorder. Something pushes back. We call it knowledge, and we have no theory of how it works.

What if knowledge isn’t a thing we hold in our heads, but a physical force that holds civilization together?

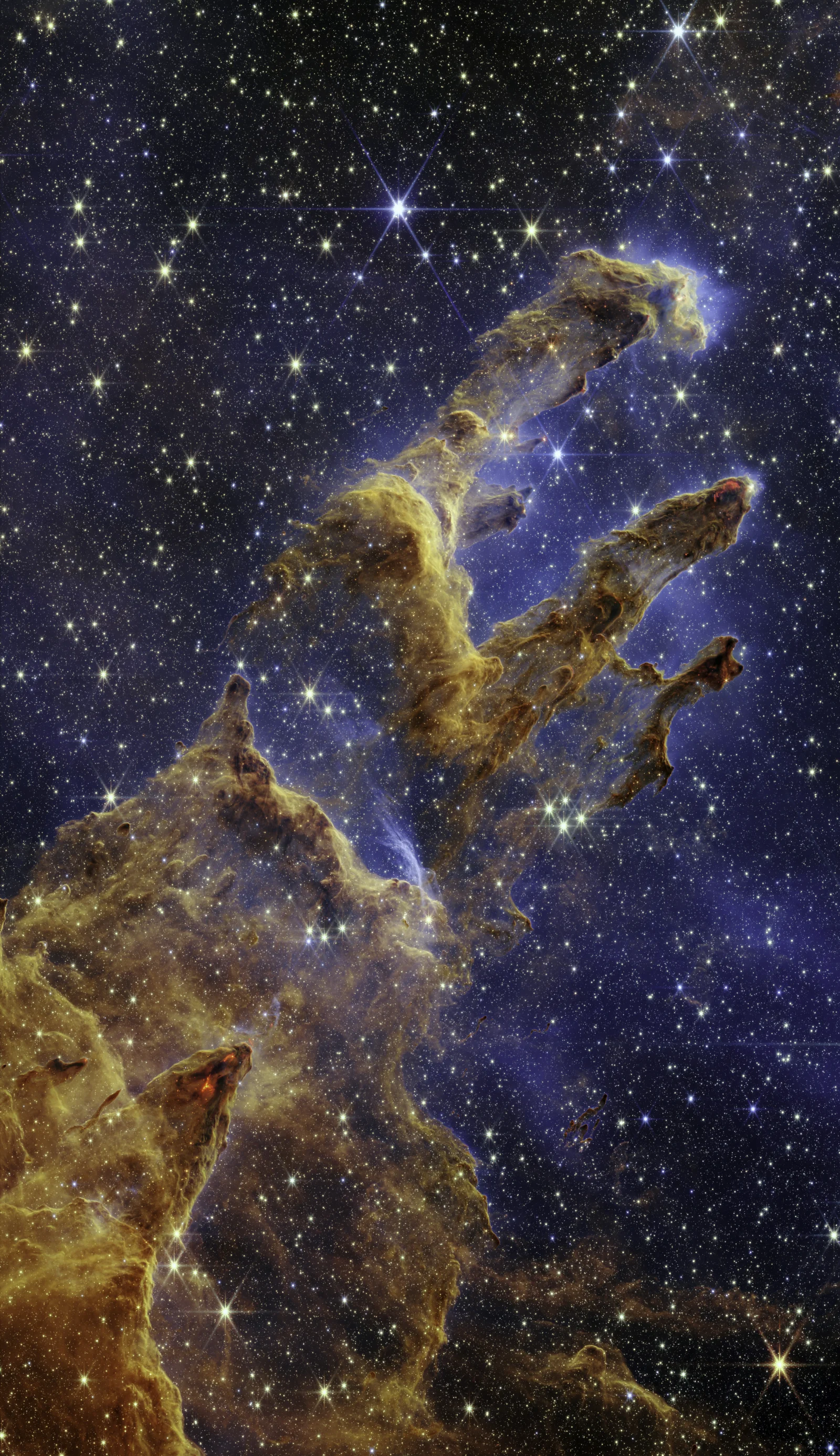

Stellar nurseries inside the Eagle Nebula — columns of gas and dust where stars are born and will eventually die. The universe makes beauty on its way to disorder. (NASA/ESA/CSA/STScI)

There is a fact about the universe that should unsettle you more than it does.

Left to itself, everything falls apart. Iron rusts. Buildings crumble. Stars burn out. The second law of thermodynamics is the deepest asymmetry in physics, the reason time has a direction, the reason there is a difference between a cup shattering on the floor and the shards leaping back into your hand. The universe trends, inexorably, toward maximum disorder.

And yet. Here you are. Reading this. A temporary arrangement of atoms so improbably organized that you can contemplate the very laws guaranteeing your eventual disassembly. Around you, a civilization of eight billion similarly improbable arrangements, living inside structures of glass and steel, transmitting symbols through fiber-optic cables at close to the speed of light.

How? What is the force that pushes back against the dark?

The answer turns out to be the same in every domain — physics, biology, economics, philosophy — and it is not a metaphor. It is the thing we named in a previous piece: knowledge. But naming it was the easy part. The hard part is seeing what it actually does, how it accumulates, why it sometimes disappears, and why its continued growth may be the most consequential process on Earth.

3.5 billion years: the molecule

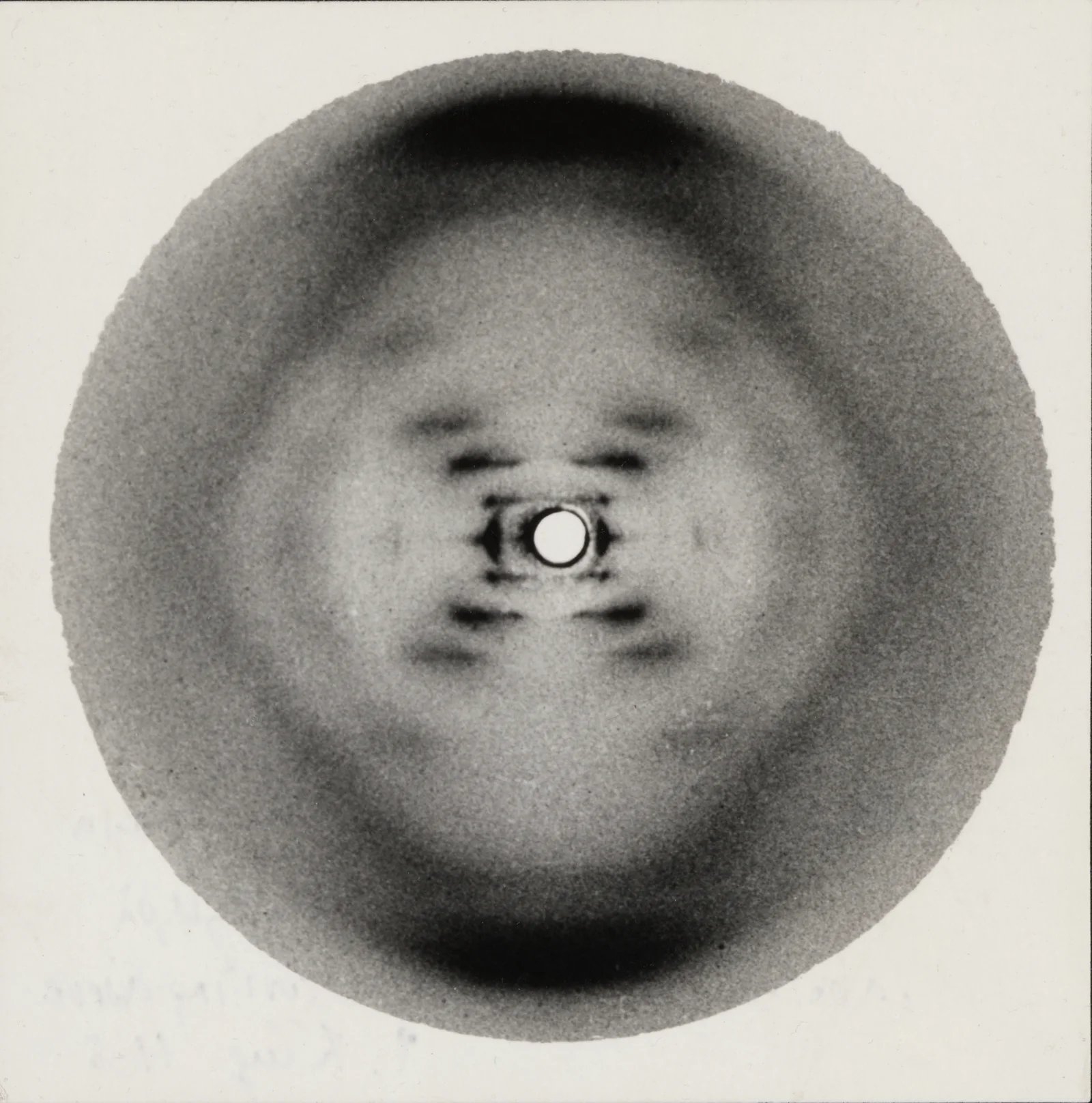

The image that confirmed Schrödinger’s prediction. Fifty-one X-ray exposures of crystallized DNA, and the one that changed biology forever. (King’s College London)

Erwin Schrödinger asked the right question in 1944: how does a living organism avoid the decay that the second law demands of everything else? His answer: organisms maintain themselves by “continually sucking orderliness from their environment,” by feeding on negative entropy. They take in structured energy, use it to maintain their internal order, and export disorder to their surroundings. Life doesn’t violate the second law. It plays a local game against it.

Schrödinger predicted that hereditary information would be stored in an “aperiodic crystal” — a structure with a regular backbone and an irregular, information-carrying sequence. Nine years before Watson and Crick, he had described DNA.

DNA is knowledge in the most literal sense: 3.5 billion years of accumulated information about environments, encoded in molecular structure, capable of causing transformations (building organisms) that would be astronomically improbable without it. Knowledge is information whose activation produces changes that the universe’s default trajectory toward disorder would never produce on its own. A genome is knowledge. So is a recipe for penicillin, a theory of orbital mechanics, and a ventilation system built by termites that don’t understand ventilation.

Cathedral-scale engineering by organisms with no comprehension of what they’ve built. The knowledge is in the structure, not in any termite’s mind. (Wikimedia Commons)

That last example matters. Daniel Dennett calls it “competence without comprehension” — functional design without a designer, knowledge without a knower. Darwin showed how: blind variation and selective retention, given sufficient time, can accumulate functional complexity that no mind ever conceived. Evolution is an information-processing algorithm that writes its results into the genome. Knowledge doesn’t require consciousness. It requires physical instantiation and the capacity to cause transformations.

Karl Popper formalized this with World 3: the world of objective knowledge (theories, mathematical structures, institutions), distinct from both the physical world and subjective experience. Einstein’s equations predicted gravitational waves a century before anyone could detect them. The knowledge was in the equations, waiting to be discovered, residing in no mind.

300 years: the constructor

David Deutsch, Popper’s most ambitious heir, asked what makes knowledge different from every other physical structure. His answer, developed in The Beginning of Infinity (2011) and formalized with Chiara Marletto in Constructor Theory, is this: knowledge is a constructor — information that, once physically instantiated, tends to cause itself to remain so.

DNA causes its environment to build another organism, keeping itself physically instantiated. A scientific theory, once understood, persists through books and teaching while enabling people to perform transformations. A constructor is anything that can cause a transformation and retain the ability to cause it again: a catalyst, an enzyme, a computer, a person with a skill.

The constructor-theoretic insight: almost all physically possible transformations require knowledge to occur. The things that happen spontaneously are a vanishingly small subset of what is physically possible. The difference between a universe of mere particles and one containing organisms, cities, and civilizations is knowledge. As Marletto put it, knowledge-containing entities are the only systems capable of sustaining transformations that would be astronomically improbable without them.

What distinguishes genuine knowledge from mere belief? Deutsch’s criterion: good explanations. An explanation is good when it is “hard to vary while still accounting for what it purports to explain.” The Greek myth explaining seasons through Demeter’s grief can be adjusted to fit anything. Earth’s axial tilt cannot be varied without destroying the explanation. The hard-to-vary criterion separates knowledge that persists (because it works) from stories that drift (because any version will do).

And unlike most physical structures, knowledge has a unique resilience: if embodied in multiple instances, it survives the destruction of any one. A book can burn. But if the knowledge in it exists in other books, other minds, other institutions, it endures.

Unless the network carrying it gets too small.

10,000 years ago: the disappearance

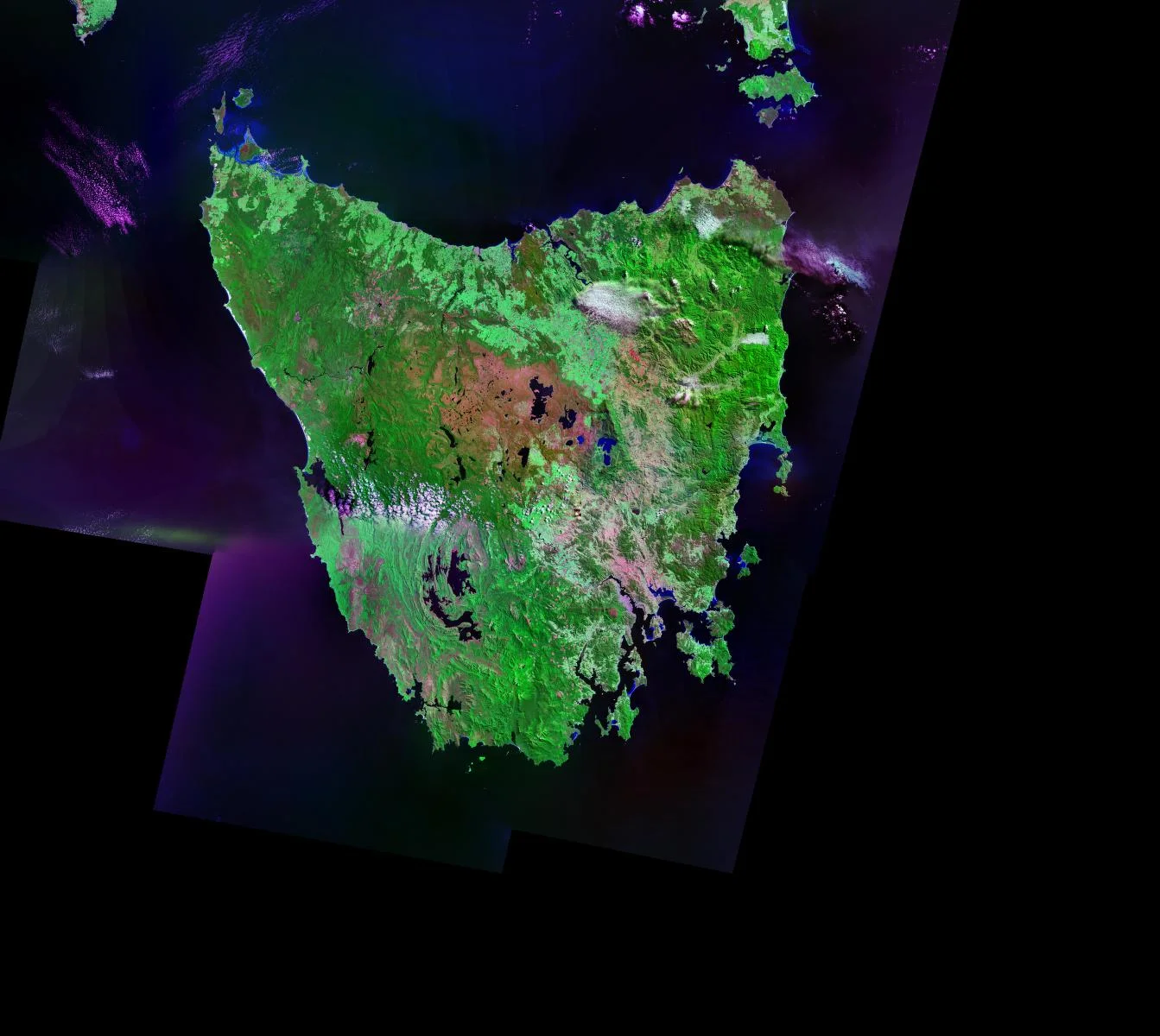

Two hundred kilometers of water. Ten thousand years of isolation. Enough to erase fire-making, bone tools, and cold-weather clothing from an entire civilization’s memory. (NASA)

Joseph Henrich’s most devastating case study is Tasmania.

When rising sea levels isolated the island roughly 10,000 years ago, its population of about 4,000 gradually lost complex technologies their ancestors had possessed: bone tools, fishhooks, cold-weather clothing, the ability to start fire from scratch. Not because they became less intelligent. Because there weren’t enough of them. With too few skilled practitioners to compensate for the inevitable errors in cultural transmission, knowledge eroded across generations. Population size predicts technological complexity across Oceanic societies, because larger groups sustain more channels of transmission, providing redundancy against loss.
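The arithmetic behind this claim can be made concrete. What follows is a minimal sketch in the spirit of the transmission-noise models used in this literature, not a reconstruction of Henrich’s own analysis. It assumes that every learner copies the most skilled person of the previous generation, that copies land on average `alpha` below the model, and that luck scatters them with Gumbel-distributed noise of spread `beta`. The function names and parameter values are illustrative assumptions, not estimates for Tasmania.

```python
import math
import random

EULER_GAMMA = 0.5772156649015329  # Euler-Mascheroni constant


def expected_skill_change(n_learners, alpha, beta):
    """Expected per-generation change in the best available skill level.

    Assumption: each copy is drawn from a Gumbel distribution centred
    `alpha` below the model, with spread `beta`. The expected maximum of
    n such draws sits beta * (gamma + ln n) above that centre.
    """
    return -alpha + beta * (EULER_GAMMA + math.log(n_learners))


def critical_population(alpha, beta):
    """Population size at which lucky copies exactly offset copying losses."""
    return math.exp(alpha / beta - EULER_GAMMA)


def simulate(n_learners, alpha, beta, generations, seed=0):
    """Monte Carlo version: follow the best skill level across generations."""
    rng = random.Random(seed)
    best = 0.0
    for _ in range(generations):
        copies = []
        for _ in range(n_learners):
            u = max(rng.random(), 1e-12)  # keep u strictly inside (0, 1)
            noise = -beta * math.log(-math.log(u))  # inverse-CDF Gumbel draw
            copies.append(best - alpha + noise)
        best = max(copies)  # the next generation copies the best performer
    return best


if __name__ == "__main__":
    alpha, beta = 7.0, 1.0  # illustrative values only
    print("threshold population:", critical_population(alpha, beta))
    for n in (50, 5000):
        print(n, expected_skill_change(n, alpha, beta),
              simulate(n, alpha, beta, generations=20))
```

Under these assumptions the expected change per generation is `-alpha + beta * (gamma + ln N)`, so there is a threshold population below which skill erodes no matter how capable the individuals are. With the illustrative values above, that threshold is on the order of 600 learners: a group of 50 loses ground every generation, while a group of 5,000 gains.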

In The Secret of Our Success (2015), Henrich argues that this is the fundamental fact about our species: we are a “cultural species,” individually unremarkable, collectively extraordinary. Our secret is not individual intelligence but high-fidelity cultural transmission — the ability to learn from others, accumulate improvements across generations, and ratchet up complexity without any single person understanding the whole.

The collective brain has a physical substrate. André Leroi-Gourhan traced the full trajectory: muscular knowledge externalized into tools, memorial knowledge externalized into writing, reasoning knowledge externalized into computation. Kim Sterelny calls this cognitive niche construction: each generation inherits a world of compressed knowledge that scaffolds the intelligence of the next.

But the ratchet can slip. Knowledge can grow, or it can vanish. The question that determines a civilization’s fate is: what keeps the ratchet turning?

1750: the loop

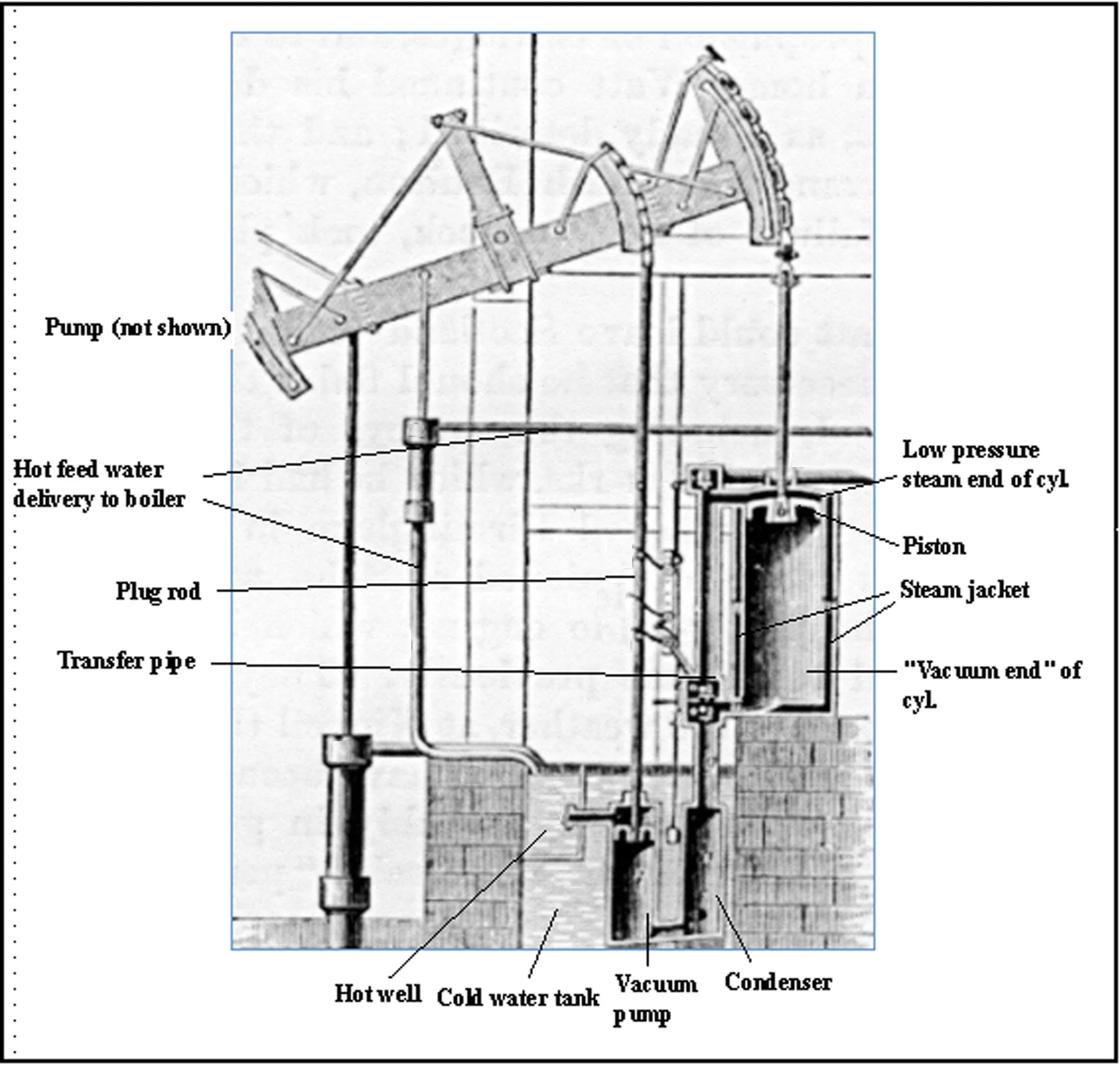

Knowledge made transmissible: a technical drawing that carries propositional and prescriptive knowledge simultaneously. The engine improved because someone understood atmospheric pressure; the drawing ensured that understanding survived its creator. (Wikimedia Commons)

Joel Mokyr, awarded the 2025 Nobel Prize in Economics, spent his career on this question. His framework distinguishes two types of useful knowledge. Propositional knowledge: understanding of natural phenomena, why things work. Prescriptive knowledge: technique, how to do things. Every technique rests on what Mokyr calls an “epistemic base” of propositional knowledge.

Before 1750, almost all technology was prescriptive with a thin or nonexistent epistemic base. Blacksmiths forged steel without understanding metallurgy. Farmers rotated crops without knowing soil chemistry. Progress was possible, but slow, accidental, and subject to diminishing returns — because without understanding why a technique worked, there was no systematic way to improve it.

Then something unprecedented happened. Mokyr calls it the “Industrial Enlightenment”: roughly 1700 to 1800, propositional and prescriptive knowledge began feeding each other in a positive loop. The steam engine improved because of insights into atmospheric pressure. Steel advanced through understanding carbon content. Understanding generated technique. Technique raised new questions. Questions deepened understanding. The loop turned, and turned, and didn’t stop.

Every previous civilization that produced innovation bursts (Song China, the Abbasid Caliphate, ancient Rome) eventually stalled. Only Europe’s burst became self-sustaining. Why? Mokyr’s answer: political fragmentation combined with cultural unity. Dozens of rival states competed for talent. Heterodox thinkers could flee to another jurisdiction. But they all participated in a shared infrastructure, the Republic of Letters, that rewarded discovery and freely circulated ideas. Knowledge couldn’t be killed because it had too many hosts.

Paul Romer’s endogenous growth theory (Nobel Prize 2018) formalized the consequence: ideas are non-rival goods. Using an idea doesn’t diminish it. With increasing returns from non-rival knowledge, the feedback loop should generate unbounded growth.

Should. But does it?

Now: the ceiling

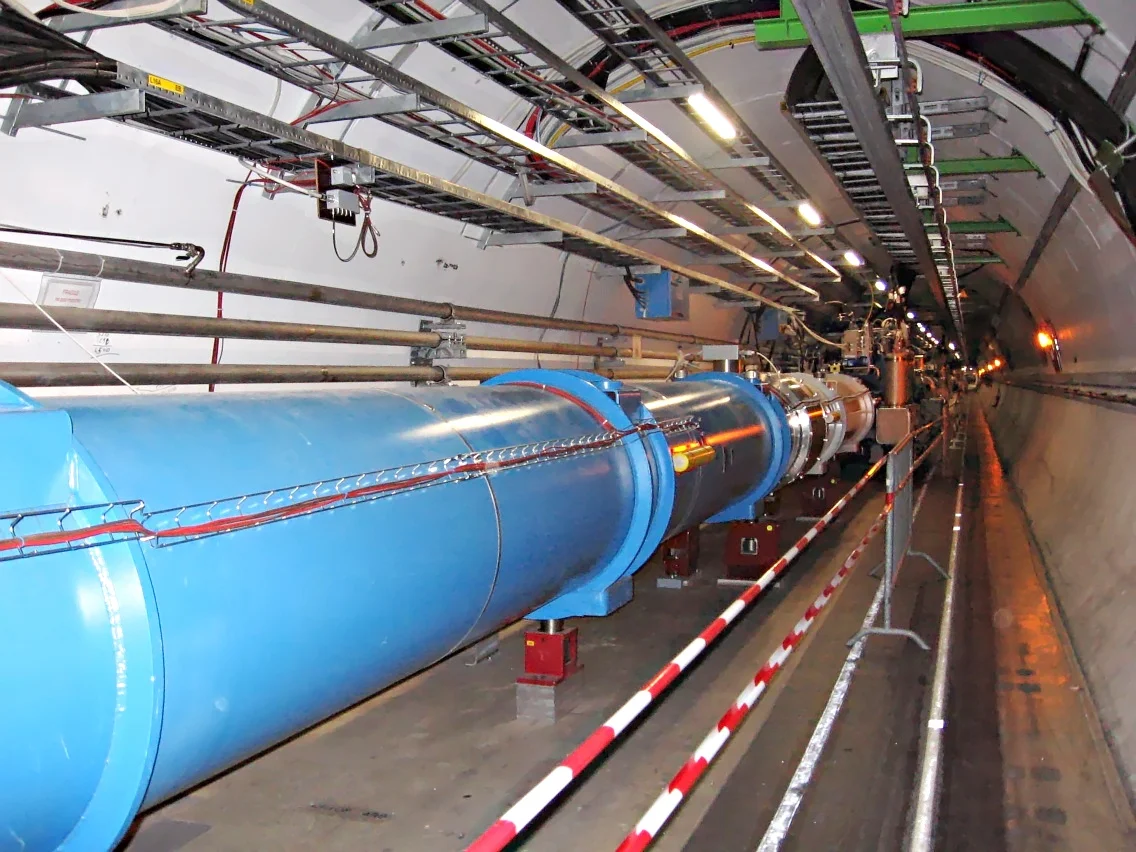

From a single engine drawing to a 27-kilometer particle accelerator staffed by thousands. The visual distance between these two images is Bloom et al.’s thesis: ideas are getting harder to find. (CERN)

In 2020, Bloom, Jones, Van Reenen, and Webb showed that “ideas are getting harder to find.” The number of researchers needed to sustain Moore’s Law has grown more than 18-fold since the early 1970s. Agricultural crop yields require ever-larger research teams for the same percentage improvement. The economy must roughly double its research efforts every 13 years just to maintain the same growth rate.
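The arithmetic in these figures is worth unpacking. The sketch below uses only the numbers quoted above, plus the accounting identity Bloom and colleagues work with: idea growth equals research productivity times the number of researchers. The function names are illustrative, and the calculation assumes smooth exponential growth, which is a simplification.

```python
import math


def annual_growth_from_doubling(doubling_years):
    """Constant yearly growth rate that doubles a quantity in `doubling_years`."""
    return 2 ** (1 / doubling_years) - 1


def doublings(fold_increase):
    """How many successive doublings add up to a given fold increase."""
    return math.log2(fold_increase)


def required_effort(years_ahead, doubling_years=13.0):
    """Research effort needed, relative to today, to hold growth constant."""
    return 2 ** (years_ahead / doubling_years)


def idea_growth(research_productivity, researchers):
    """Accounting identity: growth = productivity per researcher x researchers."""
    return research_productivity * researchers


if __name__ == "__main__":
    print("yearly growth in effort:", annual_growth_from_doubling(13))
    print("doublings in an 18-fold rise:", doublings(18))
    print("effort needed in 26 years:", required_effort(26))
    # If productivity halves while headcount doubles, growth is unchanged.
    print(idea_growth(1.0, 100), idea_growth(0.5, 200))
```

A 13-year doubling time corresponds to research effort growing by about 5.5 percent a year, and an 18-fold increase is a little over four doublings. The last line shows why the treadmill matters: when productivity per researcher halves, doubling the headcount only keeps growth where it was.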

Ben Jones documented the mechanism: the burden of knowledge. As science advances, researchers need longer training, start productive work later, specialize into narrower niches, and require bigger teams. The frontier is not just moving — it is receding faster than we can educate people to reach it. Every advance makes the next advance harder.

César Hidalgo (Why Information Grows, 2015) frames this physically: an economy’s products are “crystallized imagination,” knowledge embodied in matter. An iPhone is valuable because of the knowledge embedded in the arrangement of its atoms. But the configurations are getting harder to achieve. The low-hanging knowledge has been harvested. What remains requires more minds, more time, more coordination.

This is the race: Romer’s non-rivalry (each idea makes the next slightly more possible) versus the burden of knowledge (each advance makes the next harder to reach). For most of history, the feedback loop won. Now the race is tightening.

Is there a way to break it open?

Next: the new weapon

Two recent works frame the answer in explicitly thermodynamic terms, completing the circle back to Schrödinger.

Chapter 6 of The Last Economy argues that every act of economic value creation is an act of sorting — an entrepreneur functioning as Maxwell’s Demon, creating pockets of low entropy from market chaos. Biological intelligence runs this sorting at roughly 20 watts within a fixed cognitive architecture. Silicon intelligence is uncapped: limited only by energy input, subject to recursive self-improvement, and functionally immortal in the sense that knowledge need not die with its creator.

Ivan Zhao frames this with poetic precision: the universe is cold and doesn’t care. Life’s project is to impose its values on an indifferent cosmos by organizing energy and matter. Every evolutionary advance — symbiosis, multicellularity, nervous systems, language, science — is a new trick in life’s alliance against entropy. Tools are non-biological extensions of this alliance. If knowledge is information that causes itself to remain physically instantiated, then every technology is knowledge recruiting matter into the project of its own perpetuation.

The 2025 Nobel committee suggested that AI could “reinforce the feedback between propositional and prescriptive knowledge”: Mokyr’s loop, supercharged. Half of the 2024 Nobel Prize in Chemistry went to Demis Hassabis and John Jumper for AI-driven protein structure prediction, a sign of AI entering frontier knowledge creation, not merely knowledge application.

The thing that fights the dark

Step back and see the full arc.

3.5 billion years ago, a molecule began copying itself, and knowledge entered the universe as accumulated information about environments, encoded in chemistry. Billions of years later, a species began copying ideas, and knowledge leapt from genes to culture — fragile, dependent on network size, but incomparably faster. Three centuries ago, understanding and technique locked into a feedback loop, and knowledge began compounding. Now that loop is straining against the limits of biological minds, and something new is being built to carry it forward.

What is knowledge? It is negentropic structure with causal power: a physical configuration of matter that creates further configurations that would be astronomically improbable without it. A smartphone. A vaccine. A genome. A mathematical proof. A city. Each is a pocket of extraordinary order maintained against entropy by the knowledge embedded within it.

Schrödinger saw this in the organism. Wiener saw it in the message. Popper saw it in the theory. Henrich saw it in the culture. Mokyr saw it in the institution. Romer saw it in the economy. Deutsch saw it in the structure of physical law itself.

They were all looking at the same thing.

The universe tends toward disorder. Knowledge is the thing that fights back. It has been fighting back for 3.5 billion years — first through chemistry, then through biology, then through culture, then through science. The question that now confronts us is what kind of system can wield this force: how it compresses experience into reusable patterns, retrieves them at the right moment, and activates them in the world.

That question is the subject of what follows.

For the deepest treatment of knowledge’s role in physics, start with Deutsch’s The Beginning of Infinity. For how knowledge drives civilization, Mokyr’s The Gifts of Athena and Henrich’s The Secret of Our Success are essential. For the thermodynamic framing, see Chapter 6 of The Last Economy. For the poetic version, read Ivan Zhao’s On Universe, Life, and AI.