The universe trends toward disorder. Something pushes back. We call it knowledge, and we have no theory of how it works.

The most powerful cognitive system on Earth is not a brain. It is a civilization: eight billion minds embedded in a web of tools, institutions, languages, and networks that collectively compress experience into reusable knowledge, retrieve it when needed, and activate it to reshape the physical world.

A single neuron does something structurally similar. So does a thermostat. So does a termite colony. So, increasingly, does a large language model.

In previous pieces we established what knowledge is: neg-entropic structure with causal power, a physical configuration of matter that creates further configurations that would be astronomically improbable without it. This piece asks the next question: how does a system wield knowledge? What operations must it perform? And do those operations depend on being human, or only on being physical?

The physics of processing knowledge

Room-scale computation: when Landauer proved that erasing a single bit costs energy, these were the machines doing the erasing. Cognition is thermodynamics, whether the substrate fills a room or fits in a skull. (NASA)

Before we talk about intelligence as a system, we need to establish something that most discussions of cognition ignore: processing knowledge is a physical act with irreducible physical costs.

In 1961, Rolf Landauer at IBM showed that erasing one bit of information, resetting a memory register from an unknown state to a known zero, must dissipate at least k_B T ln 2 of energy as heat, where k_B is Boltzmann’s constant and T is the temperature. At room temperature, that’s about 2.9 × 10⁻²¹ joules. Tiny, but irreducible. You cannot erase information for free. The universe charges a tax. The principle was confirmed experimentally in 2012, when Antoine Bérut and colleagues measured the heat produced by erasing a single bit and found that it approached Landauer’s minimum as the erasure was carried out more slowly.
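The arithmetic is easy to check. This is a minimal sketch whose only inputs are Boltzmann’s constant and a temperature. We assume 300 K for “room temperature.” The one-gigabyte figure (8 × 10⁹ bits) is our own illustration and is not part of Landauer’s result.

```python
import math

# Boltzmann constant in J/K (exact by definition in the 2019 SI)
K_B = 1.380649e-23

def landauer_limit(temperature_kelvin: float) -> float:
    """Minimum heat, in joules, dissipated when one bit is erased: k_B * T * ln 2."""
    return K_B * temperature_kelvin * math.log(2)

# Assumption: "room temperature" taken as 300 K.
room = landauer_limit(300.0)
print(f"{room:.2e} J per bit")                       # 2.87e-21 J per bit

# Illustration: erasing one decimal gigabyte (8e9 bits) at the same temperature.
print(f"{8e9 * room:.2e} J to erase one gigabyte")   # 2.30e-11 J to erase one gigabyte
```

Real hardware dissipates many orders of magnitude more than this floor. The bound is a limit set by physics, not a description of how today's chips actually perform.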

Landauer’s conclusion, stated with characteristic bluntness: information “is always tied to a physical representation” and “is not a disembodied abstract entity.”

Why does this matter for intelligence? Because every cognitive operation, every act of compressing experience into a reusable pattern, every retrieval of stored knowledge, every activation of a plan, is a physical process that transforms matter and dissipates energy. Cognition is thermodynamics. A brain running at 20 watts, a data center running at megawatts: both are physical engines processing knowledge against a background of entropy. The question of how intelligence works is, at bottom, a question about how physical systems organize information.

John Wheeler, the Princeton physicist who popularized “black hole” and coined “wormhole,” pushed this to its logical extreme with his “It from Bit” hypothesis (1989): physical reality itself may emerge from information, every particle and field deriving its existence from binary choices. The holographic principle lent the idea striking support. It holds that the maximum information content of any region of space is bounded by the area of its boundary, not by its volume. In addition, Einstein’s field equations can be derived from thermodynamic arguments about entropy and horizon area. On this view, at the deepest level physics can currently reach, information appears to be more fundamental than spacetime.
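The area scaling is easy to miss in prose. Here is a minimal sketch. It assumes the standard Bekenstein-Hawking form S = A / (4 l_P²) for the maximum entropy of a region, in units of Boltzmann’s constant, and converts that value from nats to bits. The one-metre sphere is an arbitrary illustrative choice.

```python
import math

# CODATA values in SI units
HBAR = 1.054571817e-34   # reduced Planck constant, J*s
G = 6.67430e-11          # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0        # speed of light, m/s

PLANCK_AREA = HBAR * G / C**3  # l_P^2, about 2.6e-70 m^2

def holographic_bound_bits(radius_m: float) -> float:
    """Upper bound on the bits a sphere can hold, computed from its boundary area.

    S_max = A / (4 * l_P^2) gives the bound in nats; dividing by ln 2 converts to bits.
    """
    area = 4 * math.pi * radius_m**2
    return area / (4 * PLANCK_AREA) / math.log(2)

print(f"{holographic_bound_bits(1.0):.1e} bits")  # roughly 1.7e+70 bits
```

Doubling the radius quadruples the bound rather than multiplying it by eight. Capacity grows with surface area, not volume, and that is the surprising content of the principle.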

This is the ground on which our mental model of intelligence must stand. We are talking about a physical process, with physical costs, operating on a physical substrate. With that established, we can ask: what does that process actually do?

The three operations

Here is the mental model. Hold it up to any intelligent system, biological or artificial, individual or collective, and it will illuminate what that system is doing.

Intelligence is a system’s capacity to perform three operations on knowledge:

Compress

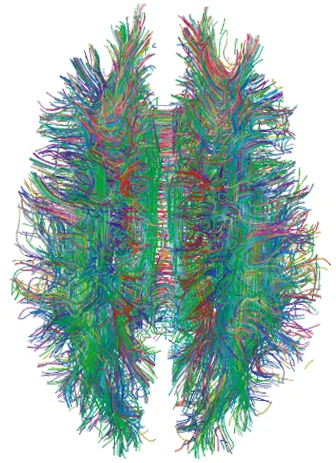

The biological compression engine: billions of connections shaped by experience, encoding patterns so deeply that retrieval feels like instinct. (Wikimedia Commons)

The first operation is compression: turning raw, abundant, noisy experience into compact, reusable patterns.

This is what learning is. A child who encounters thousands of four-legged, furry, barking creatures and forms the concept “dog” has compressed a vast sensory manifold into a single retrievable category. A physicist who formulates F = ma has compressed the motion of everything from falling apples to orbiting planets into three symbols. A craftsperson who develops an intuition for when wood is “ready” to be carved has compressed years of tactile experience into a felt sense that guides action without conscious deliberation.
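Here is that compression in miniature, as a sketch under stated assumptions. The measurements are synthetic: they are generated from F = ma plus random noise. Ordinary least squares stands in for whatever process a physicist actually uses. A thousand observations, or three thousand numbers, collapse into a single fitted constant, and that constant then predicts cases never observed.

```python
import random

def fit_through_origin(xs, ys):
    """Least-squares slope k for the model y = k * x (no intercept)."""
    return sum(x * y for x, y in zip(xs, ys)) / sum(x * x for x in xs)

rng = random.Random(42)  # fixed seed so the sketch is reproducible

# Synthetic "experience": 1,000 noisy measurements of mass, acceleration, and force.
observations = []
for _ in range(1000):
    m = rng.uniform(1.0, 10.0)        # mass, kg
    a = rng.uniform(0.0, 5.0)         # acceleration, m/s^2
    f = m * a + rng.gauss(0.0, 0.1)   # force, N, with measurement noise
    observations.append((m, a, f))

# Compression: 3,000 numbers reduced to one constant k in F = k * m * a.
k = fit_through_origin([m * a for m, a, _ in observations],
                       [f for _, _, f in observations])
print(f"k = {k:.3f}")  # close to 1.000

# Reuse: predict a case that was never observed.
print(f"force on 2 kg at 3 m/s^2: {k * 2 * 3:.2f} N")  # about 6.00 N
```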

The efficiency of this compression is what François Chollet calls skill-acquisition efficiency: how much generalization a system gains per unit of experience, given the priors it starts with. A system that needs a million examples to learn a category is compressing poorly. One that grasps it from three is compressing well. The ratio of insight to data is the signature of intelligence.

And compression has physical costs. Landauer showed that every erasure dissipates heat. Every act of compression, of discarding the inessential and retaining the essential, is a thermodynamic transaction. The brain burns glucose to compress experience into memories. A training run burns megawatt-hours to compress a corpus into model weights. Compression is work, measured in joules as surely as lifting a stone.

The dual process theory popularized by Daniel Kahneman maps directly onto this. System 1 is a library of pre-compressed patterns: heuristics, intuitions, automatic responses, built up over a lifetime of experience and, before that, over millions of years of evolution. When you catch a ball, recognize a face, or sense danger in a dark alley, System 1 is activating compressed knowledge so fast that it feels like instinct. System 2 is the compressor itself: the slow, effortful, deliberate process of constructing new patterns from novel situations. When you solve a math problem, plan a vacation, or learn a new language, System 2 is doing the compression work that will eventually become System 1’s effortless retrieval.

John Robert Anderson’s ACT-R cognitive architecture formalizes this at a finer grain. In ACT-R, cognition consists of two interacting memory systems: declarative memory (compressed facts, stored as “chunks”) and procedural memory (compressed rules for acting on facts, stored as “productions”). Every cognitive act, from solving algebra to navigating a conversation, unfolds as a sequence of production firings. Each production matches the current state of the system, often a chunk just retrieved from declarative memory, and acts on it. The architecture has been validated against hundreds of psychological experiments and maps onto specific brain regions identified through fMRI. Compression, in ACT-R, is the process by which experience is distilled into chunks and productions that can be rapidly retrieved and deployed.
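ACT-R makes retrieval quantitative. The sketch below implements its base-level learning equation, B = ln(Σ t_j^(-d)), in which each past use of a chunk contributes activation that decays with the time since that use. It also implements the retrieval-latency equation, T = F·exp(-A). The decay d = 0.5 is ACT-R’s conventional default. The latency factor F = 1.0, the chunk names, and the usage histories are assumptions for illustration. Full ACT-R also adds spreading activation from context and noise, which are omitted here.

```python
import math

DECAY = 0.5  # ACT-R's conventional default for the decay parameter d

def base_level_activation(use_ages_s, d=DECAY):
    """ACT-R base-level learning: B = ln(sum over past uses of t_j ** -d).

    use_ages_s: seconds elapsed since each past use of the chunk.
    """
    return math.log(sum(t ** -d for t in use_ages_s))

def retrieval_latency(activation, latency_factor=1.0):
    """ACT-R retrieval time F * exp(-A); F = 1.0 here is an assumption."""
    return latency_factor * math.exp(-activation)

# Hypothetical declarative memory: chunk -> ages (s) of its past uses.
chunks = {
    "7x8=56": [10, 300, 3_600, 86_400],   # practiced often and recently
    "17x23=391": [604_800],               # seen once, a week ago
}

for name, ages in chunks.items():
    a = base_level_activation(ages)
    print(f"{name:>10}  activation={a:+.2f}  latency={retrieval_latency(a):.2f}s")

# Retrieval favors the most active chunk.
best = max(chunks, key=lambda n: base_level_activation(chunks[n]))
print("retrieved:", best)  # retrieved: 7x8=56
```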

Retrieve

The second operation is retrieval: accessing the right compressed pattern at the right time in the right context.

Having knowledge is useless if you cannot find it when you need it. The most brilliant insight ever compressed into a neural pattern is worthless if it surfaces during the wrong task, or fails to surface at all. Retrieval is the mechanism by which compressed knowledge becomes available for action.

Allan Collins and Elizabeth Loftus’s spreading activation theory (1975) describes how retrieval works in biological cognition. Memory is organized as a network of interconnected nodes. Activating one node sends activation spreading outward to related ones. “Doctor” activates “nurse” faster than “bread” because the two nodes are tightly linked through shared associations. The closer two concepts sit in the network, the faster and stronger the activation spreads.
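Here is a minimal sketch of spreading activation. It assumes a small hand-built network with arbitrary link strengths and a fixed decay per step; Collins and Loftus specified no particular numbers. Activation starts at “doctor” and flows along weighted links. Nodes that are close and strongly connected accumulate the most.

```python
from collections import defaultdict

# Hypothetical semantic network: undirected links with association strengths in [0, 1].
LINKS = {
    ("doctor", "nurse"): 0.9,
    ("doctor", "hospital"): 0.8,
    ("nurse", "hospital"): 0.7,
    ("hospital", "bed"): 0.5,
    ("bed", "bread"): 0.1,      # weak, incidental link
    ("bread", "butter"): 0.9,
}

def adjacency(links):
    """Turn the link table into a neighbor list usable in both directions."""
    adj = defaultdict(list)
    for (a, b), w in links.items():
        adj[a].append((b, w))
        adj[b].append((a, w))
    return adj

def spread(source, links, decay=0.5, steps=3):
    """Propagate activation outward from `source` for a fixed number of steps.

    Each step, every node activated in the previous step passes
    activation * weight * decay to each neighbor; totals accumulate.
    """
    adj = adjacency(links)
    total = defaultdict(float)
    total[source] = 1.0
    frontier = {source: 1.0}
    for _ in range(steps):
        nxt = defaultdict(float)
        for node, act in frontier.items():
            for neighbor, w in adj[node]:
                nxt[neighbor] += act * w * decay
        for node, act in nxt.items():
            total[node] += act
        frontier = nxt
    return dict(total)

act = spread("doctor", LINKS)
print(f"nurse={act['nurse']:.3f}  bread={act.get('bread', 0.0):.4f}")  # nurse far above bread
```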

The deeper question is what these nodes actually are. Knowledge is not stored in discrete locations. It is distributed across patterns of connection weights, configurations of activation spread across thousands of units. There is no single place in your brain that “stores” the concept of a dog. The concept is the pattern. David Rumelhart and James McClelland’s work on parallel distributed processing developed this view: knowledge is a pattern that emerges from connections between simple units working in parallel. Any system with the right connection patterns could in principle retrieve and represent knowledge, whether or not it is made of biological neurons.
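A Hopfield network shows what “the concept is the pattern” means in practice. It is a close relative of PDP models, swapped in here because it is compact, and it is not one of Rumelhart and McClelland’s own models. Two hypothetical ±1 codes, “dog” and “cat,” are superimposed in a single weight matrix, so no individual weight stores either one. Even so, a corrupted cue settles back onto the right pattern.

```python
DOG = [1, 1, 1, 1, -1, -1, -1, -1]   # hypothetical +/-1 code for "dog"
CAT = [1, -1, 1, -1, 1, -1, 1, -1]   # hypothetical code, orthogonal to DOG

def train_hebbian(patterns):
    """Superimpose every pattern in ONE weight matrix (Hebbian outer-product rule)."""
    n = len(patterns[0])
    w = [[0.0] * n for _ in range(n)]
    for p in patterns:
        for i in range(n):
            for j in range(n):
                if i != j:  # no self-connections
                    w[i][j] += p[i] * p[j] / n
    return w

def recall(w, cue, sweeps=5):
    """Update units one at a time until the state settles into a stored pattern."""
    state = list(cue)
    for _ in range(sweeps):
        for i in range(len(state)):
            field = sum(w[i][j] * s for j, s in enumerate(state))
            state[i] = 1 if field >= 0 else -1
    return state

W = train_hebbian([DOG, CAT])
noisy_dog = [1, 1, 1, -1, -1, -1, -1, -1]   # DOG with one unit flipped
noisy_cat = [-1, -1, 1, -1, 1, -1, 1, -1]   # CAT with one unit flipped
print(recall(W, noisy_dog) == DOG, recall(W, noisy_cat) == CAT)  # True True
```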

This architecture has a deep consequence: retrieval is associative and context-sensitive, governed by proximity in a network of meaning rather than by alphabetical filing or logical indexing. You remember a song because you smell the perfume you were wearing when you first heard it. You solve a physics problem because its structure reminds you of a water-flow analogy you encountered years ago. The retrieval mechanism is pattern-matching over a vast landscape of compressed experience, and the quality of retrieval depends on the richness of the connections between compressed patterns.

Dedre Gentner’s structure-mapping theory (1983) suggests that retrieval also underlies analogy and creative insight. When a scientist sees the atom as a solar system, she is retrieving a compressed pattern from one domain (planetary orbits) and mapping its relational structure onto another (electron behavior). The objects are different; the relationships are the same. Creativity, in this framework, is retrieval across domains: finding compressed patterns in unexpected places and recognizing that their structure fits a new problem.
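Here is a toy version of structure mapping, which assumes each domain is described by hand as relation triples. It brute-forces every one-to-one pairing of objects and keeps the pairing that preserves the most relations. Gentner’s Structure-Mapping Engine is considerably more sophisticated; among other things, it prefers deep, interconnected systems of relations. This sketch shows only the core idea: the match is scored on relations, not on the objects themselves.

```python
from itertools import permutations

# Hypothetical relational descriptions: (relation, subject, object) triples.
SOLAR = {("attracts", "sun", "planet"),
         ("revolves_around", "planet", "sun"),
         ("more_massive_than", "sun", "planet")}
ATOM = {("attracts", "nucleus", "electron"),
        ("revolves_around", "electron", "nucleus"),
        ("more_massive_than", "nucleus", "electron")}

def objects(relations):
    """All entities mentioned in a set of relation triples, in sorted order."""
    return sorted({x for _, a, b in relations for x in (a, b)})

def best_mapping(base, target):
    """Try every one-to-one object mapping; keep the one preserving most relations."""
    base_objs, target_objs = objects(base), objects(target)
    best, best_score = None, -1
    for perm in permutations(target_objs, len(base_objs)):
        mapping = dict(zip(base_objs, perm))
        # A relation is preserved if its translated version exists in the target.
        score = sum((r, mapping[a], mapping[b]) in target for r, a, b in base)
        if score > best_score:
            best, best_score = mapping, score
    return best, best_score

mapping, score = best_mapping(SOLAR, ATOM)
print(mapping, score)  # {'planet': 'electron', 'sun': 'nucleus'} 3
```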

Activate

The third operation is activation: deploying retrieved knowledge to resolve uncertainty and produce results in the world.

This is where intelligence meets reality. Compression creates the arsenal. Retrieval selects the weapon. Activation fires it. A surgeon activating anatomical knowledge to perform an operation. An engineer activating structural knowledge to design a bridge. A diplomat activating knowledge of cultural norms to negotiate a treaty. A farmer activating knowledge of seasons and soil to plant a crop. This is what Michael Hochberg’s Theory of Intelligences formalizes: intelligence as the resolution of uncertainty producing a result or goal. The framework is substrate-independent: it describes what activation does, regardless of what it’s made of.
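Shannon entropy offers one way to make “resolution of uncertainty” concrete. This is our assumption, not Hochberg’s own formalism: the uncertainty resolved is the number of bits of uncertainty before knowledge is activated, minus the number after. The diagnostic scenario is hypothetical.

```python
import math

def entropy_bits(probs):
    """Shannon entropy H = -sum(p * log2 p), skipping zero-probability outcomes."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

# Hypothetical diagnosis: eight equally likely causes before knowledge is applied.
before = [1 / 8] * 8
# After activating relevant knowledge (say, a test that rules out six causes).
after = [0.5, 0.5, 0, 0, 0, 0, 0, 0]

resolved = entropy_bits(before) - entropy_bits(after)
print(resolved)  # 2.0
```

Three bits of uncertainty become one, so the activation resolved two bits.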

Activation never happens in a vacuum. It extends through tools, bodies, and environments. Andy Clark and David Chalmers argued in “The Extended Mind” that the boundary of cognition is not the skull; it is wherever the cognitive work gets done. When a surgeon activates knowledge through a scalpel, or a programmer through a compiler, the tool is part of the activation. Lucy Suchman’s research on situated action showed that activation is always improvised in context, shaped by material and social circumstances rather than merely executing a pre-formed plan. Knowledge activates somewhere, through something, in response to something specific.

And activation distributes. Edwin Hutchins studied how a U.S. Navy ship’s navigation team brings a vessel into port (Cognition in the Wild). No single crew member holds the full picture. One reads a bearing, another plots it, a third integrates bearings to fix position. The computation is distributed across people, instruments, and protocols. The team is the cognitive system. Hutchins showed the team possesses cognitive properties, such as error detection and information integration, that no individual member has. Activation at scale is always distributed cognition.
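Hutchins’s navigation team can be sketched as a division of labor in code. The sketch makes simplifying assumptions: a flat chart with east and north coordinates, two hypothetical landmarks, and perfect bearings. One function plays the bearing taker. Another plays the plotter, who intersects the two lines of position. Real fixes typically use three bearings, because the small triangle they form exposes errors, and that is part of Hutchins’s point about distributed error detection.

```python
import math

def take_bearing(ship, landmark):
    """Bearing taker's job: compass bearing (degrees clockwise from north) to a landmark."""
    dx, dy = landmark[0] - ship[0], landmark[1] - ship[1]  # east, north offsets
    return math.degrees(math.atan2(dx, dy)) % 360

def direction(bearing_deg):
    """Unit vector (east, north) pointing along a compass bearing."""
    r = math.radians(bearing_deg)
    return math.sin(r), math.cos(r)

def fix_position(landmark1, bearing1, landmark2, bearing2):
    """Plotter's job: intersect two lines of position.

    The ship P satisfies landmark_i = P + t_i * d_i, so
    t1*d1 - t2*d2 = landmark1 - landmark2, a 2x2 system solved by Cramer's rule.
    """
    (d1x, d1y), (d2x, d2y) = direction(bearing1), direction(bearing2)
    rx, ry = landmark1[0] - landmark2[0], landmark1[1] - landmark2[1]
    det = -d1x * d2y + d2x * d1y
    if abs(det) < 1e-12:
        raise ValueError("bearings are parallel; no unique fix")
    t1 = (-rx * d2y + d2x * ry) / det
    return landmark1[0] - t1 * d1x, landmark1[1] - t1 * d1y

true_ship = (3.0, 4.0)                        # hypothetical; unknown to the plotter
lighthouse, tower = (0.0, 20.0), (15.0, 5.0)  # hypothetical charted landmarks
b1, b2 = take_bearing(true_ship, lighthouse), take_bearing(true_ship, tower)
x, y = fix_position(lighthouse, b1, tower, b2)
print(f"fix: ({x:.3f}, {y:.3f})")  # fix: (3.000, 4.000)
```

Neither function alone knows where the ship is. The position exists only once both roles have done their work.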

Activation is also the step where individual cognition and collective civilization connect, because the activation of knowledge almost always involves tools.

Tools: compressed knowledge made activatable

Half a million years of accumulated knowledge about fracture mechanics, grip ergonomics, and edge geometry, compressed into stone. The hand axe is a theory of materials science that predates language. (MHNT / Wikimedia Commons)

Here is the pivot that connects the abstract model to the material world.

If knowledge is information that causes transformations (as we established in a previous piece), then a tool is knowledge compressed into durable, portable, activatable form. A hand axe is compressed knowledge of fracture mechanics, materialized into stone. A book is compressed knowledge of a domain, materialized into text. A bridge is compressed knowledge of structural engineering, materialized into steel and concrete. A programming language is compressed knowledge of computation, materialized into syntax.

Ernst Kapp recognized this in 1877. In Elements of a Philosophy of Technology, he proposed that every tool is an unconscious projection of a human organ: the hammer projects the fist, the lens projects the eye, the telegraph projects the nervous system. Each projection externalizes biological knowledge into a form that can be inspected, improved, and inherited. André Leroi-Gourhan traced the full evolutionary trajectory: muscular knowledge externalized into levers and engines, memorial knowledge externalized into writing and libraries, reasoning knowledge externalized into calculation and computation.

Arnold Gehlen, the German philosophical anthropologist, explained why this trajectory is necessary. In his 1940 work Der Mensch, Gehlen described humans as Mängelwesen: deficient beings, biologically unspecialized, lacking fur, claws, speed, and reliable instincts. This deficiency is what compels tool-making. Because we cannot adapt our bodies to environments, we adapt environments to our bodies through tools. Gehlen’s key concept, Entlastung (relief or unburdening), captures the cognitive function of tools precisely: they relieve the mind of routine processing, freeing cognitive capacity for higher-order compression and activation. The calculator relieves arithmetic. The calendar relieves temporal memory. The map relieves spatial reasoning. Each tool absorbs a cognitive function that the biological brain can then redeploy elsewhere.

The second externalization: memorial knowledge compressed into movable type. Each letter block is a unit of reusable, transmissible knowledge. The press democratized retrieval. (Wikimedia Commons)

The critical feature of tools is that they enable activation without full comprehension. You can use a calculator without understanding transistor physics. You can take an antibiotic without understanding pharmacology. You can drive a car without understanding combustion. Tools compress the knowledge of their creators into a form that allows others to activate it. This is what makes them civilizationally powerful: they democratize activation while concentrating the burden of compression among specialists.

Kim Sterelny calls this cognitive niche construction: each generation inherits a world of tools (compressed knowledge) and institutions (organized retrieval and activation systems) that scaffold the intelligence of the next. The cognitive niche is a distributed knowledge system. And it is what made the human species extraordinary: individually, we are Gehlen’s deficient beings, cognitively limited by biological constraints; collectively, embedded in a cognitive niche of tools, symbols, and institutions, we are the most powerful knowledge-processing system known to exist.

The intelligence of civilizations

Retrieval infrastructure at civilizational scale: two hundred thousand compressed patterns, organized for access, maintained across centuries. The Library of Alexandria was a retrieval system. So is this. So is Google Scholar. (Diliff / Wikimedia Commons)

The compress/retrieve/activate model scales. Apply it to a single human mind and you see Kahneman’s System 1 and System 2, Anderson’s ACT-R, Collins and Loftus’s spreading activation. Apply it to a civilization and you see something structurally identical operating at a vastly larger scale.

Compression at civilizational scale: Science compresses centuries of observation into theories. Mathematics compresses the behavior of infinite systems into finite notation. Engineering compresses physical principles into design standards. Law compresses millennia of conflict resolution into codified precedent. Each discipline is a compression engine, distilling raw experience into reusable, transmissible patterns.

Retrieval at civilizational scale: Libraries, databases, search engines, universities, professional networks, peer review, citation indices. All are retrieval infrastructure: systems for finding the right compressed pattern at the right time. The quality of a civilization’s retrieval infrastructure determines how much of its accumulated knowledge is actually available for activation at any given moment. The Library of Alexandria was a retrieval system. So is Google Scholar. So is a mentor who says, “You should read this paper.”

Activation at civilizational scale: Factories, hospitals, farms, laboratories, courtrooms, construction sites, software deployments. All are activation infrastructure: places where retrieved knowledge is deployed to resolve uncertainty and produce results. A hospital activates medical knowledge. A factory activates engineering knowledge. A courtroom activates legal knowledge.

The power of human civilization is that these three operations run in a loop. Activation produces new experience. New experience gets compressed into new knowledge. New knowledge gets stored in retrievable form. Retrieval makes it available for further activation. The loop turns, and the cognitive niche gets richer with each revolution. This is economic historian Joel Mokyr’s feedback loop between propositional knowledge (knowing what) and prescriptive knowledge (knowing how), restated in terms of information processing. And the speed of the loop determines the fate of the civilization that runs it.
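Here is a toy version of the loop, using a simple epsilon-greedy learner as the stand-in. It is our illustration, not Mokyr’s model. Three hypothetical techniques have success rates the learner cannot see. On each turn the learner retrieves its best current estimate, occasionally exploring instead. It then activates that technique and compresses the outcome into a running average, discarding the raw record.

```python
import random

rng = random.Random(0)  # fixed seed for reproducibility

# Hypothetical environment: success rates unknown to the learner.
TRUE_SUCCESS = {"A": 0.2, "B": 0.5, "C": 0.8}

# Knowledge store: each technique's history compressed to (trials, mean success).
knowledge = {name: (0, 0.0) for name in TRUE_SUCCESS}

def retrieve(store):
    """Return the technique currently believed best."""
    return max(store, key=lambda name: store[name][1])

def activate(technique):
    """Act in the world: 1 for success, 0 for failure."""
    return 1 if rng.random() < TRUE_SUCCESS[technique] else 0

def compress(store, technique, outcome):
    """Fold one new experience into the running mean; the raw outcome is discarded."""
    n, mean = store[technique]
    store[technique] = (n + 1, mean + (outcome - mean) / (n + 1))

EXPLORE = 0.1  # assumed fraction of turns spent trying something other than the best guess
for _ in range(2000):
    if rng.random() < EXPLORE:
        choice = rng.choice(sorted(knowledge))
    else:
        choice = retrieve(knowledge)
    compress(knowledge, choice, activate(choice))

print(retrieve(knowledge), knowledge)
```

Compression here is lossy by design. Each technique’s entire history is reduced to two numbers, and that is enough for retrieval to settle on the technique that works best.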

The universal replicator

And now, David Deutsch’s strongest claim.

In The Beginning of Infinity, Deutsch argues that humans are universal explainers, and therefore potential universal constructors: the only known entities capable of creating any knowledge that the laws of physics permit. This is because we possess the capacity for creative conjecture, generating new explanations that go beyond existing data, subjecting them to criticism, and retaining those that survive.

In Deutsch’s framework, humans are universal replicators of knowledge. We can originate knowledge (through creative conjecture), compress it (into explanations, theories, tools), replicate it (through language, writing, teaching, toolmaking), and activate it (through action in the physical world). This closed loop, from conjecture to criticism to compression to replication to activation, is what Deutsch means by “the beginning of infinity.” As long as this loop runs, there is no upper limit to what we can understand, construct, or achieve.

For most of human history, this was an exclusively human capacity. Only human minds could conjecture, criticize, and compress. Only human hands (augmented by the tools they built) could activate. The loop ran through us and only through us.

The criteria restated

The cognitive niche, visible from space. Every point of light is compressed knowledge being activated: power grids, cities, networks, the accumulated compress/retrieve/activate infrastructure of human civilization glowing against the dark. (NASA/NOAA)

Now step back and see what the model reveals.

We have defined intelligence as a system’s capacity to compress information into reusable knowledge, retrieve the right pattern at the right moment, and activate it to produce results in the world. We have shown that tools are knowledge compressed into activatable form, and that the cognitive niche, humanity’s accumulated ecology of tools and institutions, runs these operations at civilizational scale. We have grounded all of this in physics: every cognitive operation is a thermodynamic transaction. And we have seen that Deutsch’s universal replicator describes entities that compress, replicate, and activate knowledge without limit. His loop maps directly onto the framework: conjecture is a form of compression (turning intuition into testable structure), criticism is retrieval’s quality control (finding where the pattern breaks), and activation is how knowledge enters the physical world.

We need a name for systems that meet these criteria: systems that compress information into retrievable knowledge, expand and update that knowledge through error-correction, and activate it into world-impacting action. We will call them cognitive actor-seekers, entities that seek goals by acting on knowledge, wielding the compress/retrieve/activate loop as their fundamental operating principle.

For billions of years, the only cognitive actor-seekers were biological organisms. For hundreds of thousands of years, human culture extended their reach through tools and language. For a few centuries, scientific institutions supercharged the loop through conjecture and criticism.

The question facing our era is whether we are constructing cognitive actor-seekers of a new kind. If we are, then the thing that fights the dark has acquired a new form, and the gap between the word and the world is about to close.

The compress/retrieve/activate framework synthesizes Hochberg’s Theory of Intelligences, Chollet’s On the Measure of Intelligence, Anderson’s ACT-R architecture, Collins and Loftus’s spreading activation theory, Deutsch’s The Beginning of Infinity, and Sterelny’s The Evolved Apprentice. For what knowledge is and why it matters, see The Thing That Fights the Dark. For what happens when cognitive actor-seekers cross from symbolic to physical, see Let There Be Light.